Durable Context: The prerequisite everyone building AI needs

Ivan Dwyer

Product Marketing, Axonius

Everyone is racing to capture more context. But is anyone asking whether the context they have is trustworthy enough to build on? In security, that's not a philosophical question, and there's no margin for error. It's the difference between a decision that reduces risk and one that compounds it.

In this article, I’ll cover durable context: a security data layer grounded in verified truths.

The context graph

For years, security tools acted as systems of record for objects: customers, employees, and operations. Static lists of assets that enable human analysis, understanding, and eventual action. But as we move toward a world of faster threat-response cycles and AI-driven security operations, 'lists' aren't enough. We need systems of reasoning that capture the institutional memory currently trapped in Slack threads and tribal knowledge. This is the promise of the context graph.

However, for cybersecurity, there is a fundamental hurdle: a context graph built on bad data can confidently lead you in the wrong direction. In most enterprises, EDR, CMDB, and identity tools regularly disagree on who owns what and what's actually covered. Training an AI operator, or a human analyst for that matter, on 'dirty' context leads to bad decisions. Acting faster only means failing faster, and self-learning feedback loops accumulate mistakes faster than we can correct them, compounding risk.

This is what the context graph conversation hasn't addressed within a cybersecurity environment: before you can trust why a decision was made, you must be able to verify the conditions under which it was made.

What breaks context

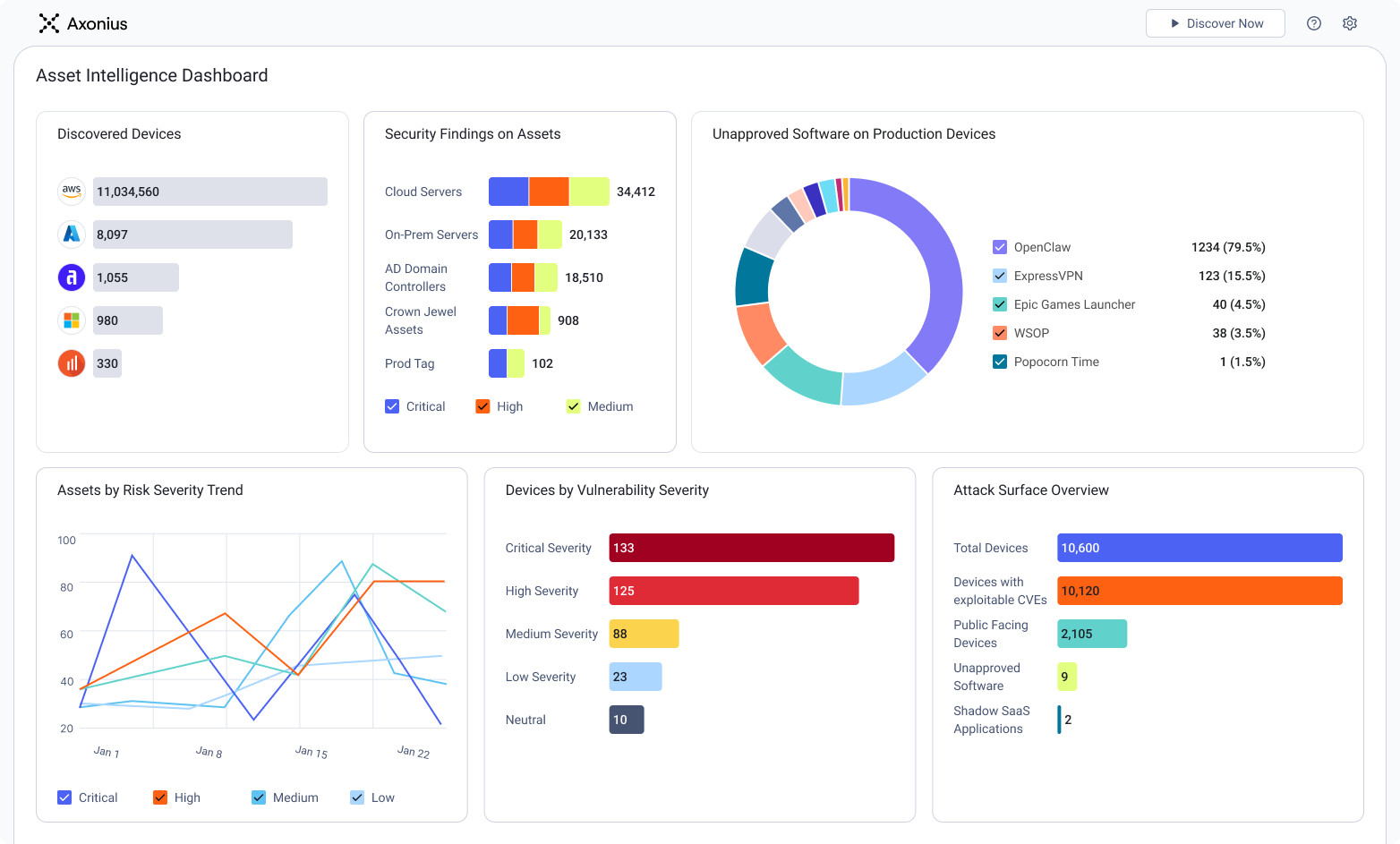

Every tool in the security stack generates context. EDRs report agent coverage. Scanners report vulnerability findings. CMDBs report ownership and configuration. Identity providers report artifacts and access. The stack isn't short on data; most organizations are drowning in it.

The problem is structural. Each tool reports from its own vantage point, bounded by what it can see, how recently it ran, and how it defines the assets it tracks. When tools describe the same real-world asset differently, the conflict rarely gets resolved. The contradictory versions sit in parallel.

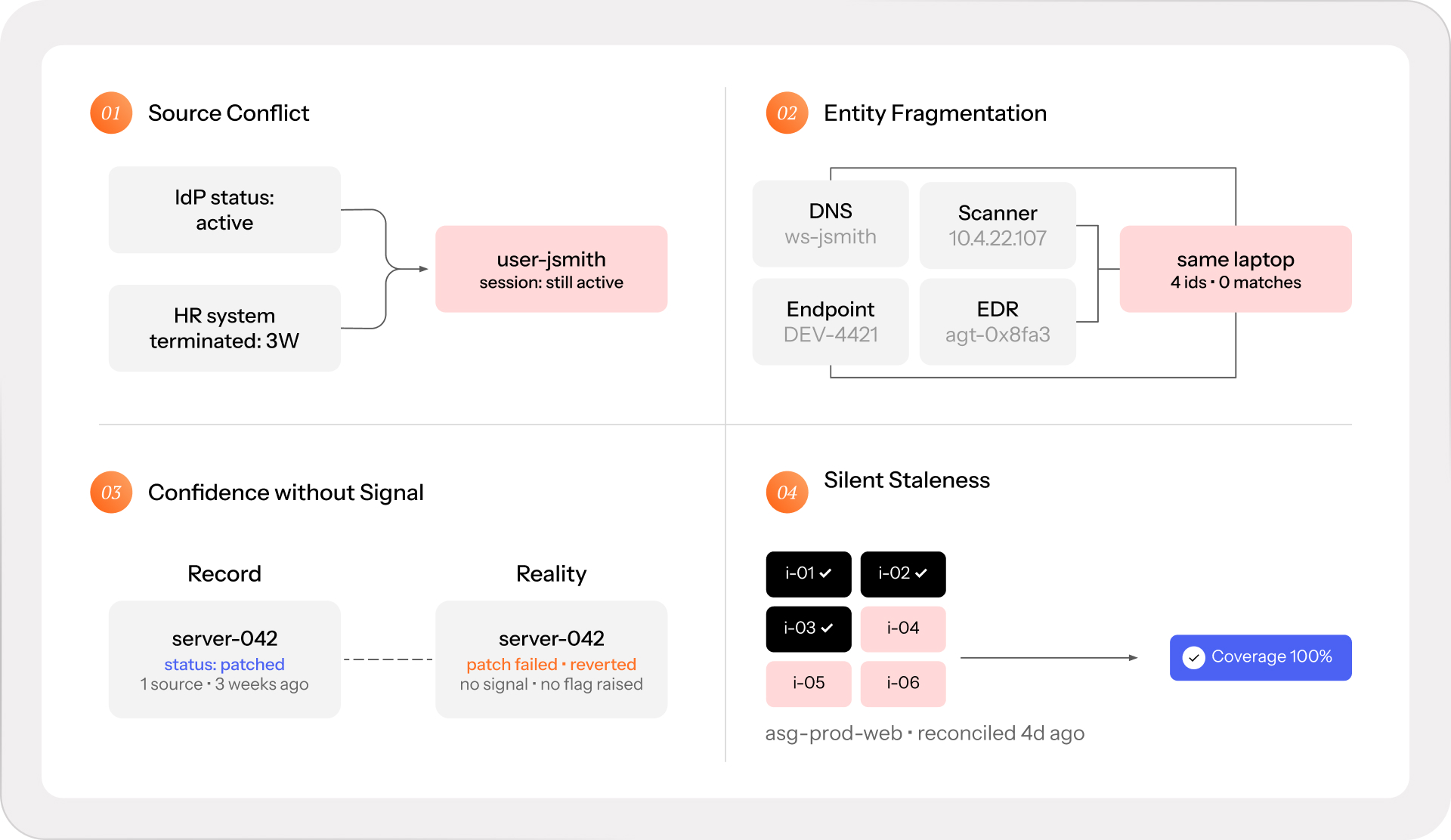

Raw context fails in a few ways:

Source conflict. Each tool writes its own version of truth without checking the others. The IdP shows an account as active; HR systems show the employee was terminated three weeks ago. An active session is still running. Neither system flagged the other.

Entity fragmentation. The same laptop appears as a hostname in DNS, an IP address in the network scanner, a device record in endpoint management, and an agent ID in the EDR. Without explicit logic matching these to a single real-world asset, cross-tool queries produce phantom gaps and phantom coverage in equal measure.

Confidence without signal. Nothing in raw context tells you which records to trust. One scanner, last run three weeks ago, reports a server as fully patched. But the patch failed silently and the server reverted; the record still shows it as clean.

Silent staleness. Environments change faster than records do. An auto-scaling group added eight instances overnight, but the new instances came up without the right agent. The record still shows the group as covered, which it was when the record was written.
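To make the source-conflict case concrete, here is a minimal sketch of detecting disagreement before anything tries to resolve it. The record shape, source names, and the assumption that status values are already normalized to a shared vocabulary are all illustrative, not taken from any particular product.

```python
from collections import defaultdict

# Hypothetical records: (source, entity_id, attribute, value).
# Assumes values were already mapped to a shared vocabulary.
RECORDS = [
    ("idp", "jdoe", "status", "active"),
    ("hr", "jdoe", "status", "terminated"),
    ("idp", "asmith", "status", "active"),
    ("hr", "asmith", "status", "active"),
]


def find_conflicts(records):
    """Return {(entity, attribute): {source: value}} where sources disagree."""
    seen = defaultdict(dict)
    for source, entity, attribute, value in records:
        seen[(entity, attribute)][source] = value
    return {
        key: by_source
        for key, by_source in seen.items()
        if len(set(by_source.values())) > 1
    }


conflicts = find_conflicts(RECORDS)
```

With this sample data, only the `jdoe` record is flagged, because the IdP and HR values differ. Detection like this says nothing about which source is right; that is the job of the reconciliation layer.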

Aggregation alone doesn't fix this. Pulling data from dozens of tools into a single platform is the right starting point, but it's not the final destination. What turns aggregation into durable context is a reconciliation layer that deals with source authority, conflict resolution, confidence tracking, and drift detection. Without that layer, the unified view is only as reliable as the least trustworthy source feeding it.

Durable cybersecurity context

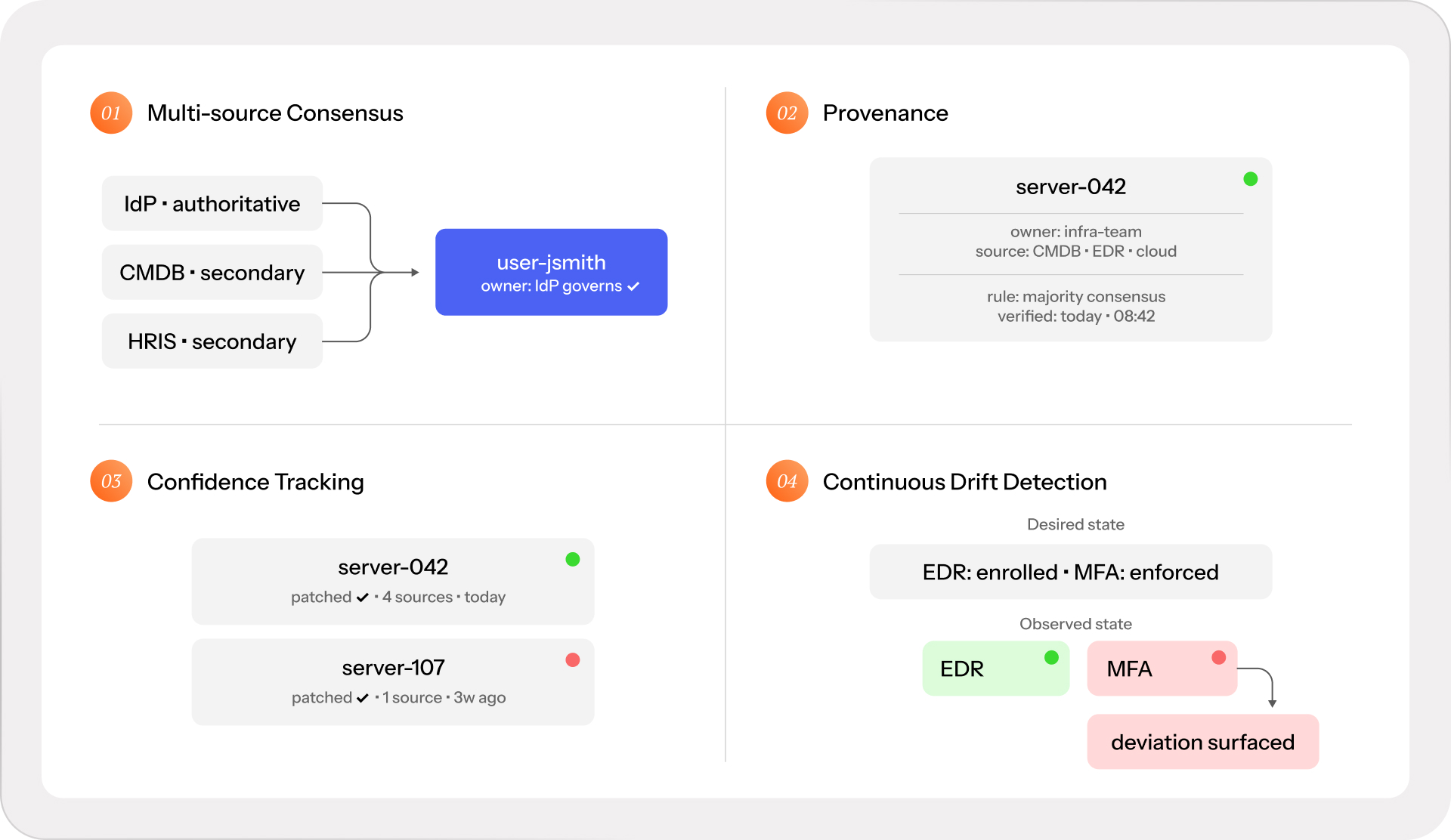

Durable context holds up as the environment changes — continuously reconciled across sources, with conflicts resolved before they propagate, confidence tracked at the attribute level, and drift detected the moment it happens.

Four properties distinguish it from raw context.

Multi-source consensus. An attribute isn't trusted until the reconciliation logic explicitly explains why one source overrides the others. Source authority is field-specific: the IdP is authoritative for identity attributes; the EDR is authoritative for agent health; the cloud console is authoritative for resource tags. When sources conflict outside their authoritative domain, the conflict is surfaced rather than silently resolved by whichever system wrote last.

Provenance. Every field carries a lineage: which sources contributed, which reconciliation rule governed the resolution, when it was last verified. This allows downstream decisions, and eventually agents, to assess whether the context they're acting on is strong enough to act on.

Confidence tracking. Invariants are binary, but confidence in the context behind them isn't. Confidence degrades on a known schedule when sources go quiet, when reconciliation cycles haven't run, or when contributing sources are known to disagree on a class of attribute. A record reconciled this morning carries more weight than one last verified three weeks ago, and that difference should be visible rather than hidden.

Continuous drift detection. The system knows what should be true — the desired state defined by policy — and continuously checks whether it still is. Deviation surfaces immediately, while it's still correctable, rather than at the next audit or the next incident.
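As a rough illustration of the first two properties, the sketch below resolves a single field using a field-specific authority map and keeps the lineage alongside the value. The `AUTHORITY` map, the field names, and the `ResolvedField` shape are assumptions made for the example, not a description of how any specific platform implements reconciliation.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Assumed authority map: which source wins for which field.
AUTHORITY = {
    "owner_email": "idp",
    "agent_health": "edr",
    "resource_tags": "cloud",
}


@dataclass
class ResolvedField:
    name: str
    value: object          # None when the conflict is left unresolved
    status: str            # "resolved", "agreed", or "conflict"
    rule: str              # which rule produced the outcome
    sources: dict          # every contributing source and what it reported
    verified_at: datetime  # when this resolution was computed


def reconcile(name, observations, now=None):
    """Resolve one field of one asset from {source: value} observations."""
    now = now or datetime.now(timezone.utc)
    lineage = dict(observations)
    authority = AUTHORITY.get(name)
    if authority in observations:
        # The authoritative source for this field reported a value: it wins.
        return ResolvedField(name, observations[authority], "resolved",
                             f"authority:{authority}", lineage, now)
    distinct = set(observations.values())
    if len(distinct) == 1:
        # No authority, but every source agrees.
        return ResolvedField(name, distinct.pop(), "agreed",
                             "consensus", lineage, now)
    # No authority and no agreement: surface it instead of picking a winner.
    return ResolvedField(name, None, "conflict", "no-authority", lineage, now)


health = reconcile("agent_health", {"edr": "healthy", "cmdb": "unknown"})
os_version = reconcile("os_version", {"cmdb": "12.1", "scanner": "12.3"})
```

Here `health` resolves to the EDR's value because the EDR is the assumed authority for agent health, while `os_version` comes back as an explicit conflict with both observations preserved. The point of the design is that last-write-wins never appears as a fallback.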

Together, these properties are what make asset intelligence decision-grade. Decision-grade is the output bar: the standard the data has to meet before it's trustworthy enough to prioritize from, act on, and prove coverage with. Durable context is the reconciliation mechanism to meet this bar.
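Confidence tracking can be sketched just as simply. The example below assumes exponential decay with a seven-day half-life, which is only one possible "known schedule"; the function name, the half-life, and the decay curve are illustrative choices, not something the approach prescribes.

```python
from datetime import datetime, timedelta, timezone


def confidence(last_verified, now, half_life=timedelta(days=7)):
    """Score in (0, 1] that halves for every `half_life` since verification."""
    # Clamp negative ages (clock skew) to zero so the score never exceeds 1.
    age = max(now - last_verified, timedelta(0))
    # Dividing one timedelta by another yields a float.
    return 0.5 ** (age / half_life)


NOW = datetime(2025, 1, 22, tzinfo=timezone.utc)
this_morning = confidence(NOW, NOW)
one_week_old = confidence(NOW - timedelta(days=7), NOW)
three_weeks_old = confidence(NOW - timedelta(days=21), NOW)
```

Under these assumptions a record verified just now scores 1.0, a week-old record 0.5, and a record last verified three weeks ago 0.125. The exact curve matters less than the fact that the score is stored and visible at the attribute level.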

The architecture of durable context

Let’s return to the original thesis for a moment. Context graphs are valuable because they accumulate the reasoning behind decisions into something queryable: the exceptions, the precedents, the cross-system judgment. That accumulated context becomes the foundation for the next generation of agents, tools, and programs that can operate with genuine organizational intelligence rather than following static rules.

This thesis is immediately recognizable to us at Axonius. It describes, with precision, what decision-grade asset intelligence is building towards.

As we like to say: no one system of record is the one source of truth. Durable context requires reconciliation above the stack. Not a better CMDB, not a scanner with broader reach, not a consolidated identity provider. Only a genuinely independent platform of platforms. One that adapts to any stack, reads from every source, and reconciles what no individual system could produce on its own: durable context.

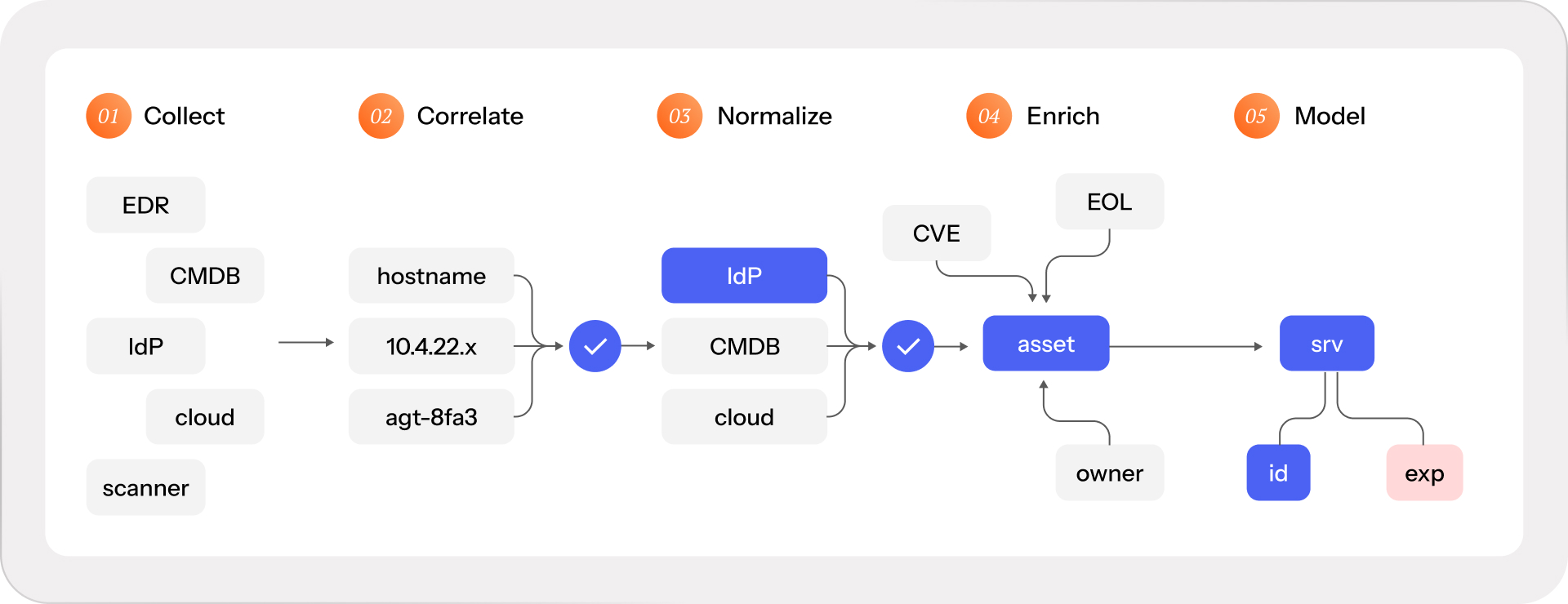

We’ve written about the inner workings of the Axonius asset intelligence pipeline before. At a high level, the stages are:

Collect. Ingest asset and exposure data through deep, bi-directional connections across security, IT, cloud, identity, SaaS, and infrastructure. Breadth is non-negotiable; partial source coverage produces partial truth regardless of how sophisticated the downstream stages are.

Correlate. Resolve entity identity across sources. A managed endpoint might appear as a hostname in DNS, an IP address in the network scanner, a device record in endpoint management, and an agent ID in the EDR. Correlation requires a field-aware engine that matches these representations to a single trusted profile. Getting it wrong propagates through every downstream stage.

Normalize. Apply schema-level logic and source authority rules to standardize fields and resolve conflicts. The IdP governs identity attributes. The EDR governs agent health. The cloud console governs resource tags. Last-write-wins is not a reconciliation policy; the normalization layer is where an explicit policy replaces it.

Enrich. Layer in external intelligence and internal context (CVE metadata, EOL signals, threat feeds, ownership, and criticality attributes) onto a verified foundation. The sequence matters: enrichment before correlation produces the appearance of depth over unresolved conflicts.

Model. Map relationships continuously across assets by type (ownership, access, control coverage, exposure linkage, dependency), forming a dynamic graph of the environment. Every node carries an observed state, desired state, and the delta between them. Every edge carries a freshness timestamp. It's the structure that makes cross-domain queries possible — queries that no single tool could answer.
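The Correlate stage is the easiest one to illustrate in code. Below is a minimal sketch that merges per-tool records into a single profile whenever they share any identifier, using a union-find structure. The records, the `ID_FIELDS` list, and the rule that every identifier is equally trustworthy are assumptions for the example; a field-aware engine would weight identifiers differently (an IP address, for instance, is far weaker evidence than a serial number).

```python
from collections import defaultdict

# Hypothetical per-tool records; identifier names and values are illustrative.
RECORDS = [
    {"source": "dns", "hostname": "lt-0142"},
    {"source": "scanner", "ip": "10.0.4.17", "mac": "aa:bb:cc:00:01:42"},
    {"source": "mdm", "hostname": "lt-0142", "serial": "C02XK1",
     "mac": "aa:bb:cc:00:01:42"},
    {"source": "edr", "agent_id": "agt-9f3", "serial": "C02XK1"},
    {"source": "scanner", "ip": "10.0.4.99"},
]
ID_FIELDS = ("hostname", "ip", "mac", "serial", "agent_id")


def correlate(records):
    """Group records that share any identifier; return the sources per group."""
    parent = list(range(len(records)))

    def find(i):
        # Follow parent links to the group root, compressing the path.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    first_seen = {}  # (field, value) -> index of first record carrying it
    for i, record in enumerate(records):
        for field in ID_FIELDS:
            if field not in record:
                continue
            key = (field, record[field])
            if key in first_seen:
                parent[find(i)] = find(first_seen[key])  # merge the groups
            else:
                first_seen[key] = i

    groups = defaultdict(list)
    for i, record in enumerate(records):
        groups[find(i)].append(record["source"])
    return sorted(sorted(sources) for sources in groups.values())


profiles = correlate(RECORDS)
```

In this sample, the DNS, scanner, MDM, and EDR records collapse into one profile through chained matches on hostname, MAC address, and serial number, while the second scanner record stays on its own as an asset no other tool knows about.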

The asset fabric is the operational substrate that the context graph thesis depends on. Decision traces need a verified account of what was true when a decision was made. Agents need a foundation they can assess for reliability before acting. Security programs need continuous proof that invariants hold.

All of it runs on durable context, and durable context requires a layer that the entire stack reports to. That’s asset intelligence.

What durable context actually enables

Let’s look at this through a concrete mechanism.

Every cybersecurity program is built around invariants: conditions that must continuously hold. Every endpoint running a sanctioned EDR agent. Every privileged account behind MFA. Every critical application connected to SSO.

The challenge is that most invariants are stated as policies but verified as spot checks. A policy is a claim. Audit evidence is a sample. Neither is continuous proof that the condition actually holds across the full environment, right now, including the assets that have drifted since the last check.

Making an invariant hold requires three things: a defined scope, a verifiable condition, and a continuous evidence mechanism.

The scope question is where most programs break. An EDR console is a reliable source of evidence for the devices it manages, but structurally blind to the devices it doesn't. If the inventory defining scope lives inside the same system responsible for coverage, the gaps are invisible by design. The EDR can't report on what it can't see.

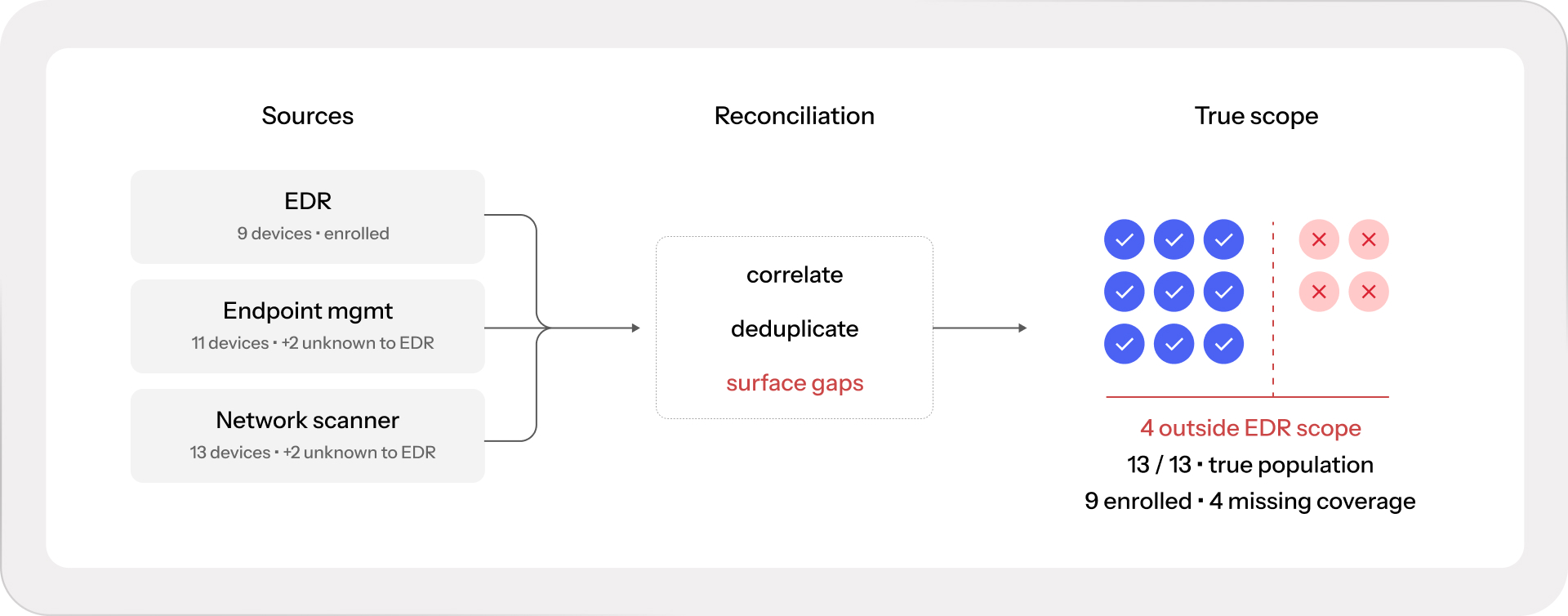

This is why the reconciliation layer matters in practice. Cross-referencing the EDR against endpoint management, the network scanner, the identity provider, and the cloud console produces a scope that no single tool could define. The coverage gaps only become visible when the full population is reconciled from above. What the EDR reports as complete coverage is, against the actual population, something measurably less.
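The scope argument reduces to set arithmetic once entities have been correlated. The sketch below assumes each inventory has already been mapped to one ID per real-world device; the asset IDs and the `coverage_report` helper are hypothetical.

```python
# Hypothetical asset IDs, assumed to be correlated to one ID per real device.
edr = {"lt-01", "lt-02", "srv-01"}
mdm = {"lt-01", "lt-02", "lt-03"}
scanner = {"lt-01", "srv-01", "srv-02"}
cloud = {"srv-01", "srv-02", "srv-03"}


def coverage_report(covered, *other_inventories):
    """Measure coverage against the union of every inventory, not just one."""
    covered = set(covered)
    population = covered.union(*other_inventories)
    gaps = population - covered
    return {
        "population": len(population),
        "covered": len(covered),
        # An empty population is treated as fully covered to avoid dividing by 0.
        "coverage": len(covered) / len(population) if population else 1.0,
        "gaps": sorted(gaps),
    }


report = coverage_report(edr, mdm, scanner, cloud)
```

Measured against its own console, the EDR in this example covers three of three devices. Measured against the reconciled population of six, coverage is 0.5, and the three gaps (`lt-03`, `srv-02`, `srv-03`) are exactly the devices the EDR could never have reported on.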

Run that logic across every invariant in the program (agent coverage, MFA enforcement, SSO adoption, patch compliance, backup coverage) and the same pattern holds. Durable context is the mechanism that turns policies into continuous proof.

The self-healing environment

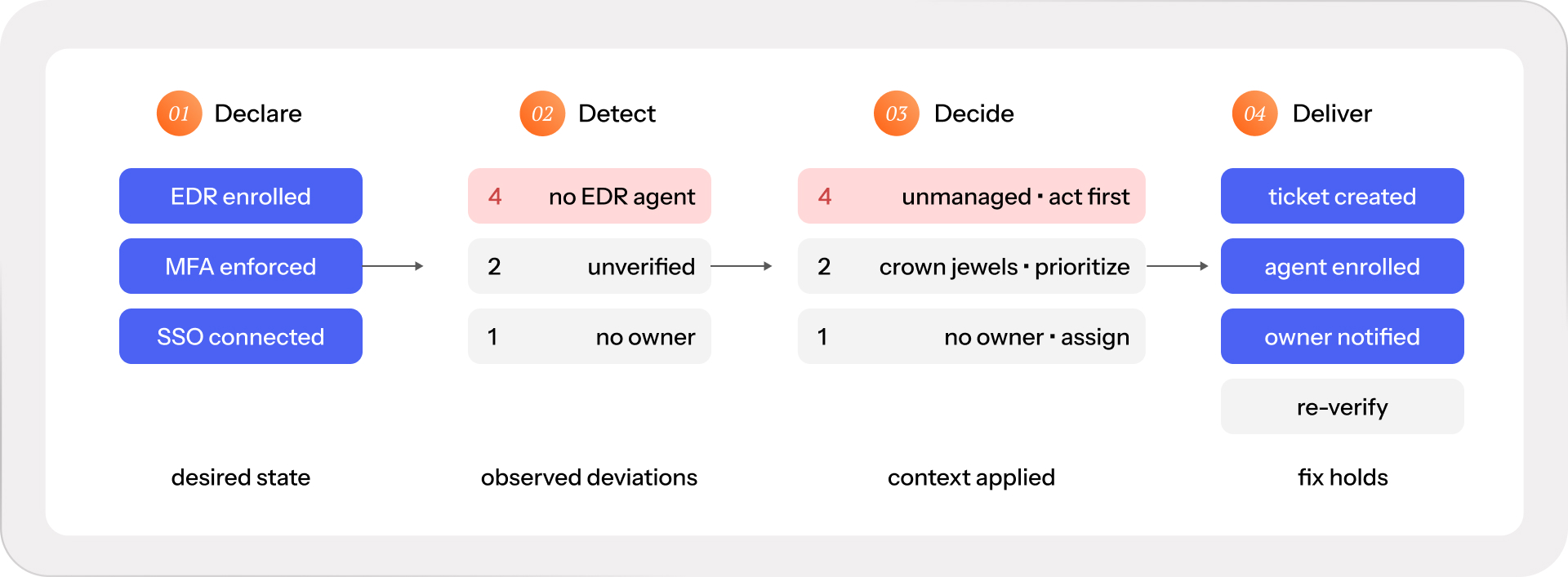

The end state of all of this is an environment that proactively corrects itself. A true self-healing environment needs a rhythm, “in the pocket” like James Brown’s backing band. Four beats:

Declare what you want to be true: every endpoint covered, every privileged account behind MFA, every critical system backed up.

Detect deviations from that declared state as they emerge: misconfigurations, policy violations, exposures, vulnerable software.

Decide whether the deviation matters: measure risk with the full asset and business context to prioritize effectively.

Deliver actions to restore the truth: through automation where the risk is low, through coordinated workflows where human judgment is required.
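The four beats can be sketched as a small loop. Everything here is an assumption made for illustration: the `Asset` fields, the 1 to 5 criticality scale, the thresholds, and especially the extra `reverify` path, which reflects the idea that a weakly verified record should be re-checked before anything acts on it.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    asset_id: str
    criticality: int    # hypothetical scale: 1 (low) to 5 (high)
    has_edr: bool
    confidence: float   # 0.0 to 1.0, how well verified the record is


# Declare: the conditions that should hold for every asset in scope.
INVARIANTS = {"edr_installed": lambda asset: asset.has_edr}


def detect(assets):
    """Detect: return (asset, invariant_name) pairs that currently fail."""
    return [
        (asset, name)
        for asset in assets
        for name, holds in INVARIANTS.items()
        if not holds(asset)
    ]


def decide(asset, min_confidence=0.8, auto_max_criticality=2):
    """Decide: choose a path from risk and from how trustworthy the record is."""
    if asset.confidence < min_confidence:
        return "reverify"        # assumption: do not act on weak context
    if asset.criticality <= auto_max_criticality:
        return "auto_remediate"  # low risk: automation
    return "open_ticket"         # higher risk: human judgment


def deliver(assets):
    """Deliver: here, only return the planned action per failing invariant."""
    return [(asset.asset_id, name, decide(asset))
            for asset, name in detect(assets)]


FLEET = [
    Asset("lt-01", criticality=1, has_edr=False, confidence=0.95),
    Asset("db-01", criticality=5, has_edr=False, confidence=0.90),
    Asset("lt-07", criticality=1, has_edr=False, confidence=0.40),
    Asset("lt-02", criticality=1, has_edr=True, confidence=0.99),
]
plan = deliver(FLEET)
```

In this run, `lt-01` is routed to automation, `db-01` to a human workflow, and `lt-07` back to verification because its record is too weak to act on. `lt-02` satisfies the invariant and never enters the plan.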

Feed it raw context, and each beat degrades. Declare good state against an incomplete picture, and the scope is wrong from the start. Detect against stale records, and deviations get missed or buried in false positives. Decide without full asset and business context, and the wrong things get prioritized. Deliver into a model with unresolved entity conflicts, and the right action lands on the wrong asset.

This matters more as security programs begin deploying agents to run parts of the loop autonomously. An agent inherits whatever context it's given. It has no independent way to verify that the ownership record it's acting on is current, that the asset it's remediating is the right one, or that the coverage state it's been told is complete actually is.

Durable context changes the whole equation. When the reconciliation layer is doing its job, each beat runs on conditions that are actually true, whether autonomous or not. This is what the context graph thesis was pointing toward all along. The accumulated reasoning behind decisions compounds correctly when the operational context underneath it is durable.

To close: durable context is what makes every output decision-grade.
